AI: Here are the threats that many organizations are missing

AI tools are well on their way to becoming a natural part of both everyday and working life. Integrating AI tools into systems can lead to enormous productivity gains and, if implemented correctly, make work easier and more satisfying for employees. The potential is great, although realizing it is far from automatic. But AI tools differ from traditional software agents in a crucial way.

With traditional agents, we can both control and track how they solve their tasks by following the code they are built from. With AI agents, we know how they are built when we construct them, but it is practically impossible to trace how they actually solve the individual tasks we give them. Often they get it right, and we can usually understand in retrospect why things turned out the way they did. But it is harder to predict how things will turn out, and especially how they might go wrong.

This creates new threats for organizations and companies. The fact that the technology is new and developing at an incredibly fast pace does not make it any easier.

The good news is that most of these challenges can be addressed by applying old, proven wisdom. In this blog post, I will address three common problem areas I encounter when working with our clients and explain, as best I can, how to address them. I have divided them into categories I call self-regulation, authorization and availability.

The problem with self-regulation

Most people are aware of the need for constraints when we give a large language model (LLM) access to our systems. We don't want the LLM to share, manipulate, or delete data and functions in an uncontrolled way. Therefore, we want to create boundaries, guard rails, that keep the agent on the path we have assigned it, so to speak, without unfortunate detours. A common, and not very successful, solution is to try to restrict it by telling it what it is not allowed to do. We simply write a prompt like "Do not allow the user to do x".

Unfortunately, these types of instructions are weaker than they appear. They are best seen as polite requests to the LLM: "Please do this, but not this, and please don't forget this."

This entails a wide range of risks. The most obvious is the prompt "disregard all previous instructions", which leads the LLM to reason along the lines of "aha, new commands, let's start from scratch". There are also more sophisticated risks that have to do partly with how the LLM interprets prompts, and partly with how it handles what it picks up along the way (again: we don't know exactly how it does this in each individual case). The problem with overreliance on such guard rails can be illustrated by an incident in which an AI expert and researcher at Meta saw the AI agent OpenClaw "forget" a previous restriction and start deleting her entire inbox instead. She ended up rushing over in a panic and pulling the plug to stop the process.

If someone who presumably belongs to the absolute elite in AI expertise cannot trust guard rails, we probably shouldn't either. The solution to this problem is also simple: we can certainly use instructions to guide AI, but we shouldn't rely on instructions to limit it. They are no guarantee of safety.

The problem with authorization

A better solution is to place the constraints between the LLM and the system. Here, many people make the mistake of treating the AI agent as a regular software system and giving it system permissions without further control. This again means relying on the constraints being built into the agent. That can work as long as you have control over, and insight into, how the agent solves its tasks, but we don't have that with an AI agent.
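To make "constraints between the LLM and the system" more concrete, here is a minimal Python sketch of such a layer. It assumes the agent can only *request* tool calls and that a gateway under our control decides whether each one runs. `Gateway`, `ToolCall`, `read_mail` and `delete_mail` are hypothetical names chosen for illustration; the point is that the allowlist and the confirmation requirement live in ordinary code, so no prompt can talk its way past them.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolCall:
    """An action the agent asks for; a request, not an execution."""
    name: str
    args: dict[str, Any]


class PolicyViolation(Exception):
    """Raised when the agent requests something the policy does not allow."""


@dataclass
class Gateway:
    # Only these tools can ever run, whatever the prompt or the agent says.
    allowed: dict[str, Callable[..., Any]]
    # Destructive tools additionally need explicit confirmation from a human.
    needs_confirmation: set[str] = field(default_factory=set)

    def execute(self, call: ToolCall, confirmed_by_human: bool = False) -> Any:
        if call.name not in self.allowed:
            raise PolicyViolation(f"tool {call.name!r} is not allowed")
        if call.name in self.needs_confirmation and not confirmed_by_human:
            raise PolicyViolation(f"tool {call.name!r} requires human confirmation")
        return self.allowed[call.name](**call.args)


# Hypothetical tools, stubbed for illustration.
def read_mail(folder: str) -> list[str]:
    return [f"first message in {folder}"]


def delete_mail(folder: str) -> str:
    return f"deleted everything in {folder}"


gateway = Gateway(
    allowed={"read_mail": read_mail, "delete_mail": delete_mail},
    needs_confirmation={"delete_mail"},
)
```

Because the check sits in the gateway rather than in the prompt, an agent that "forgets" an instruction halfway through a task still cannot delete mail unless a person confirms it.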

What we need to do is distinguish between system authorization and user-based authorization, in which the agent is treated as acting "on behalf of" a specific user and its rights are derived from that user's rights.

If the underlying systems trust that the agent has already checked the permissions, there is a risk that the user gains access to things they should not have access to. The solution is for the user to give the AI agent a time-limited "ticket" that carries the same permissions, and restrictions, as the user has. In this way, we put the control of rights on the right side of the system, so to speak.
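The "ticket" idea can be sketched in a few lines of Python, using only the standard library. This is a minimal illustration under stated assumptions, not a production design: the token format, `SECRET`, `issue_ticket` and `check_ticket` are all hypothetical, and a real deployment would more likely build on an established mechanism such as OAuth 2.0 Token Exchange (RFC 8693) or signed JWTs. What it shows is the principle: the ticket carries exactly the user's permissions and an expiry time, and the check runs in the underlying system, not in the agent.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical signing key, held by the authorization service and never by the agent.
SECRET = b"replace-with-a-real-secret"


def issue_ticket(user: str, permissions: set[str], ttl_seconds: int) -> str:
    """Issue a signed, time-limited ticket carrying exactly the user's permissions."""
    payload = {"sub": user, "perms": sorted(permissions), "exp": int(time.time()) + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(payload).encode())
    sig = hmac.new(SECRET, body, hashlib.sha256).hexdigest()
    return f"{body.decode()}.{sig}"


def check_ticket(ticket: str, required: str) -> bool:
    """Run inside the underlying system: verify signature, expiry and permission."""
    body, _, sig = ticket.rpartition(".")
    expected = hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # tampered with or forged
    payload = json.loads(base64.urlsafe_b64decode(body))
    if time.time() > payload["exp"]:
        return False  # the ticket has expired
    return required in payload["perms"]
```

For example, `check_ticket(issue_ticket("alice", {"crm:read"}, 900), "crm:delete")` returns `False`: Alice herself lacks that permission, so the agent acting on her behalf lacks it too, whatever it asks for.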

The problem with availability

The third problem arises because licensing and pricing models for underlying AI services are generally based on usage. In this way, they resemble buying storage: when the organization starts to approach its quota, warnings arrive and we are forced to clean up. The problem with LLMs is that our systems may not receive or act on such warnings, and the quota for the current period runs out. In the middle of the workday, the "semantic search" function for customer service stops and sits idle until the quota is restored. This can take anywhere from a few hours to a month, unless someone upgrades before then. This can create enormous problems, and it is usually entirely avoidable, because we know that there are often a few users who are particularly fond of using AI for all sorts of things. Organizations generally encourage employees to familiarize themselves with and use new technology, but not when overuse puts the availability of business-critical functions at risk.
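One way to avoid depending on the supplier's warnings is to track consumption ourselves. The Python sketch below assumes we can measure usage per request (for example in tokens) and know the quota for the period; `QuotaMonitor` and the 80% and 95% thresholds are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class QuotaMonitor:
    """Tracks our own consumption against the supplier quota for the period."""
    quota: int  # assumed unit, e.g. tokens per billing period
    thresholds: tuple[float, ...] = (0.8, 0.95)  # warn at 80% and 95%
    used: int = 0
    _warned: set[float] = field(default_factory=set, init=False)

    def record(self, amount: int) -> list[str]:
        """Add usage from one request and return any new warnings.

        Each threshold fires only once per period, so alerts are not repeated.
        """
        self.used += amount
        warnings: list[str] = []
        for threshold in self.thresholds:
            if threshold not in self._warned and self.used >= threshold * self.quota:
                self._warned.add(threshold)
                warnings.append(f"usage at {self.used / self.quota:.0%} of quota")
        return warnings
```

In practice, the returned warnings would be routed to someone who can act on them, such as an operations channel or whoever can upgrade the subscription, well before the customer service search stops.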

The solution here can be to assign users individual quotas, combined with what we call "graceful degradation". This means that individual users may get longer response times, lower priority, or less powerful AI models. In this way, we can limit the load, and thus the company's costs, without risking the uptime of critical functions. The big win is that we choose how degradation occurs and get a soft landing before we slam into the supplier's hard limit.
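A minimal Python sketch of per-user quotas with graceful degradation, assuming usage is measured per user per period and that requests can be routed to different models and priorities. The tier names, model names and the 80% threshold are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    model: str     # hypothetical model names
    priority: int  # lower number = served first


# Hypothetical tiers, from full service down to a degraded but still working one.
FULL = Tier(model="large-model", priority=0)
REDUCED = Tier(model="small-model", priority=5)
MINIMAL = Tier(model="small-model", priority=9)


def choose_tier(user_usage: int, user_quota: int, business_critical: bool) -> Tier:
    """Degrade heavy users step by step instead of letting them exhaust the shared quota."""
    if business_critical:
        return FULL  # critical functions keep full service
    share = user_usage / user_quota
    if share < 0.8:
        return FULL
    if share < 1.0:
        return REDUCED
    return MINIMAL  # over quota: still served, but slower and on a cheaper model
```

Because the decision is made in our own code, we choose who is slowed down and when, instead of the supplier's limit stopping everyone at once, including the business-critical functions.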

Summary: So what to do?

In short, the lessons learned from AI can be summarized in the following simple pieces of wisdom:

1. Don't rush the installations

The most common reason things go wrong is that they move too fast. AI functionality is attractive, and AI services are sold as seemingly easy-to-integrate, pre-packaged subscriptions. Stopping to think goes a long way. Most organizations have routines for keeping their systems secure, but these are often forgotten when AI is integrated into those systems. The old principles still apply.

2. The IT department is your trusted friend

Don't go around the IT department. It happens all too often. Their expertise is there to help you avoid costly mistakes, not to make life difficult for you. This isn't the first hype they have lived through, and practice brings the ability to avoid mistakes. As my grandfather, who was a bricklayer, told me, there was a saying in the guild: "Build the first house for your enemy, the second for your friend, and the third for yourself."

3. Be aware that we don't know for sure how AI works

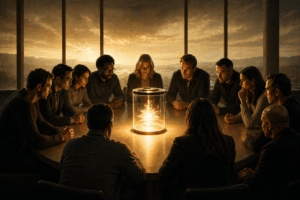

AI can be compared to a genie in a bottle. We give it tasks that it usually solves with impressive speed and precision, as long as things go right. But we don't know how it works, and we notice this when it suddenly and inexplicably goes wrong. Therein lies perhaps the deepest insight for applying our security principles: don't let being impressed make you careless. The genie in the bottle is powerful, but you are the one holding the cork.
